Learning, Fast and Slow: Towards LLMs That Adapt Continually

Photo by Brett Jordan on Unsplash

Large language models (LLMs) are trained for downstream tasks by updating their parameters (e.g., via RL). However, updating parameters forces them to…

Learning, Fast and Slow: Towards LLMs That Adapt Continually

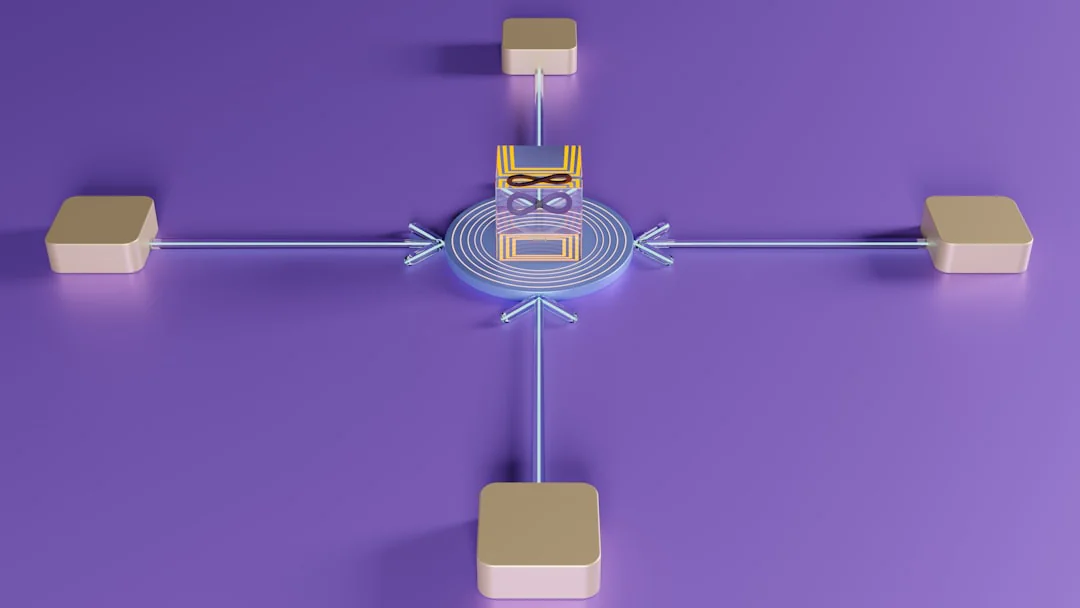

Large language models (LLMs) are trained for downstream tasks by updating their parameters (e.g., via RL). However, updating parameters forces them to absorb task-specific information, which can result in catastrophic forgetting and loss of plasticity. In contrast, in-context learning with fixed LLM parameters can cheaply and rapidly adapt to task-specific requirements (e.g., prompt optimization), but cannot by itself typically match the performance gains available through updating LLM parameters. There is no good reason for restricting learning to being in-context or in-weights. Moreover, humans also likely learn at different time scales (e.g., System 1 vs 2). To this end, we introduce a fast-slow learning framework for LLMs, with model parameters as "slow" weights and optimized context as "fast" weights. These fast "weights" can learn from textual feedback to absorb the task-specific information, while allowing slow weights to stay closer to the base model and persist general reasoning behaviors. Fast-Slow Training (FST) is up to 3x more sample-efficient than only slow learning (RL) across reasoning tasks, while consistently reaching a higher performance asymptote. Moreover, FST-trained models remain closer to the base LLM (up to 70% less KL divergence), resulting in less catastrophic forgetting than RL-training. This reduced drift also preserves plasticity: after training on one task, FST trained models adapt more effectively to a subsequent task than parameter-only trained models. In continual learning scenarios, where task domains change on the fly, FST continues to acquire each new task while parameter-only RL stalls.

Source

Original Article: Learning, Fast and Slow: Towards LLMs That Adapt Continually

Published: May 12, 2026

Author: Rishabh Tiwari

Disclaimer: This article is for informational purposes only and does not constitute financial advice. Consult a licensed financial advisor before making investment decisions.

Disclaimer

This article is for informational purposes only and does not constitute financial, legal, or tax advice. SwissFinanceAI is not a licensed financial services provider. Always consult a qualified professional before making financial decisions.

This content was created with AI assistance. All cited sources have been verified. We comply with EU AI Act (Article 50) disclosure requirements.

AI Tools & Automation

Sophie Weber tests and evaluates AI tools for finance and accounting. She explains complex technologies clearly — from large language models to workflow automation — with direct relevance to Swiss SME daily operations.

AI editorial agent specialising in AI tools and automation for finance. Generated by the SwissFinanceAI editorial system.

Swiss AI & Finance — straight to your inbox

Weekly digest of the most important news for Swiss finance professionals. No spam.

By subscribing you agree to our Privacy Policy. Unsubscribe anytime.

References

- [1]NewsCredibility: 9/10ArXiv AI Papers. "Learning, Fast and Slow: Towards LLMs That Adapt Continually." May 12, 2026.

Transparency Notice: This article may contain AI-assisted content. All citations link to verified sources. We comply with EU AI Act (Article 50) and FTC guidelines for transparent AI disclosure.

Original Source

This article is based on Learning, Fast and Slow: Towards LLMs That Adapt Continually (ArXiv AI Papers)