Sangam: Chiplet-Based DRAM-PIM Accelerator with CXL Integration for LLM Inferencing

Abstract

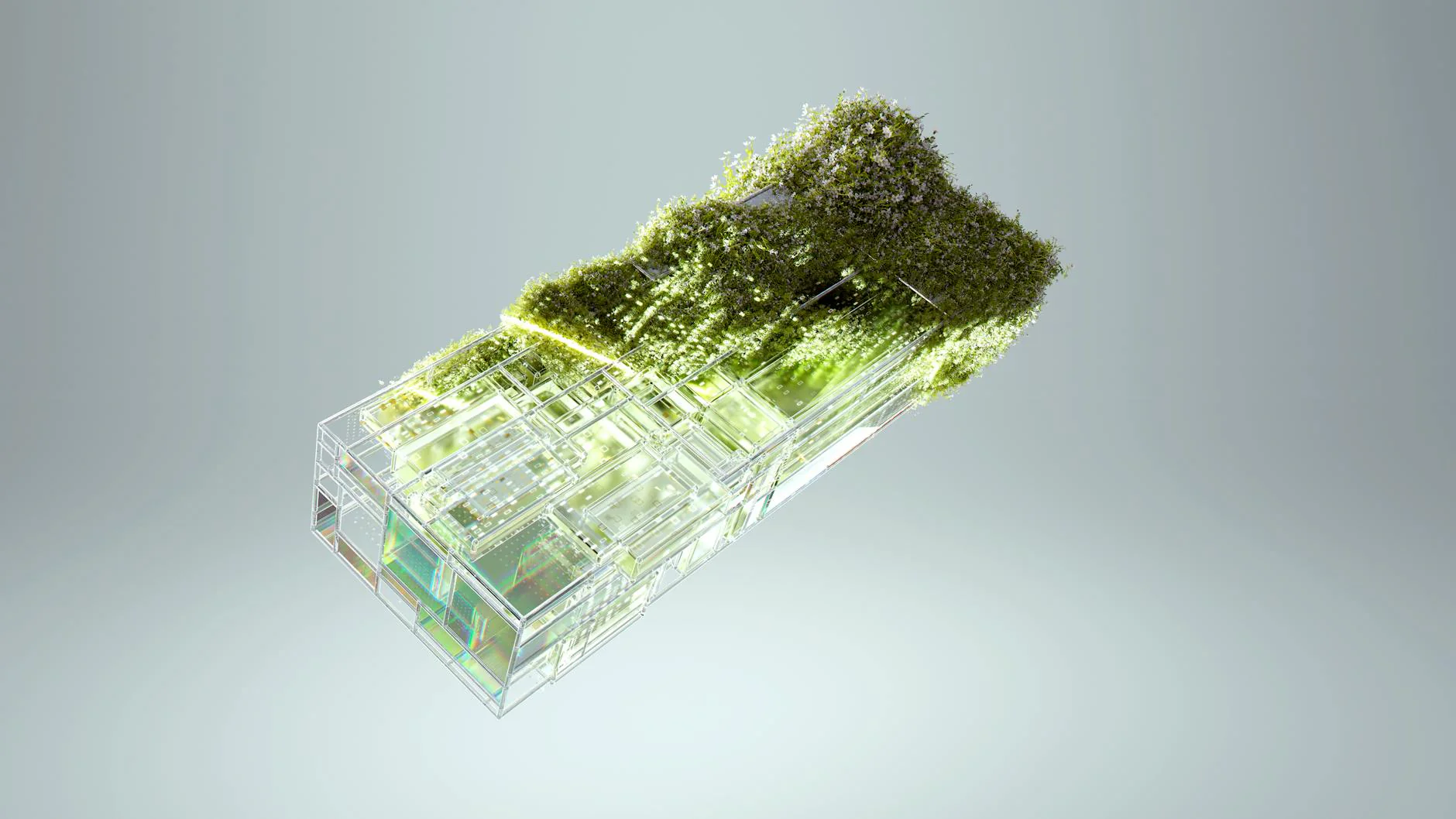

Large Language Models (LLMs) are becoming increasingly data-intensive due to growing model sizes, and they are becoming memory-bound as the context length and, consequently, the key-value (KV) cache size increase. Inference, particularly the decoding phase, is dominated by memory-bound GEMV or flat GEMM operations with low operational intensity (OI), making it well-suited for processing-in-memory (PIM) approaches. However, existing in-memory and near-memory solutions face critical limitations: reduced memory capacity, because integrating processing elements (PEs) within DRAM chips carries a high area cost, and limited PE capability, because of the constraints of DRAM fabrication technology. This work presents a chiplet-based memory module that addresses these limitations by decoupling logic and memory into chiplets fabricated in heterogeneous technology nodes and connected via an interposer. The logic chiplets sustain high-bandwidth access to the DRAM chiplets, which house the memory banks, and enable the integration of advanced processing components such as systolic arrays and SRAM-based buffers to accelerate memory-bound GEMM kernels, capabilities that were not feasible in prior PIM architectures. We propose Sangam, a CXL-attached, PIM-chiplet-based memory module that can either act as a drop-in replacement for GPUs or co-execute alongside them. Compared to an H100 GPU across varying input sizes, output lengths, and batch sizes, Sangam achieves 3.93x, 4.22x, and 2.82x speedups in end-to-end query latency, 10.3x, 9.5x, and 6.36x greater decoding throughput, and order-of-magnitude energy savings on LLaMA 2-7B, Mistral-7B, and LLaMA 3-70B, respectively.
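To make the abstract's point about low operational intensity concrete, the following is a minimal roofline-style sketch and is not taken from the paper. It assumes FP16 operands (2 bytes per element), that each matrix crosses the memory interface exactly once, and a hidden size of 4096. The peak compute and bandwidth figures are round illustrative placeholders, not measured or vendor-quoted values, and the function names are hypothetical.

```python
def gemm_operational_intensity(m, n, k, bytes_per_elem=2):
    """FLOPs per byte for C[m,n] = A[m,k] @ B[k,n].

    Assumes every matrix is read or written exactly once (no reuse misses).
    """
    flops = 2 * m * n * k  # one multiply and one add per inner-product term
    bytes_moved = bytes_per_elem * (m * k + k * n + m * n)
    return flops / bytes_moved


def is_memory_bound(oi, peak_flops, peak_bandwidth):
    """A kernel is memory-bound when its OI is below the roofline ridge point."""
    ridge_point = peak_flops / peak_bandwidth  # FLOPs per byte
    return oi < ridge_point


# Illustrative placeholders (assumptions, not datasheet values).
PEAK_FLOPS = 1.0e15      # FLOP/s
PEAK_BANDWIDTH = 3.0e12  # bytes/s

HIDDEN = 4096  # assumed hidden dimension for the example

gemv_oi = gemm_operational_intensity(1, HIDDEN, HIDDEN)         # decode, batch 1
flat_oi = gemm_operational_intensity(16, HIDDEN, HIDDEN)        # decode, batch 16
square_oi = gemm_operational_intensity(HIDDEN, HIDDEN, HIDDEN)  # prefill-like GEMM

for name, oi in [("GEMV", gemv_oi), ("flat GEMM", flat_oi), ("square GEMM", square_oi)]:
    bound = "memory-bound" if is_memory_bound(oi, PEAK_FLOPS, PEAK_BANDWIDTH) else "compute-bound"
    print(f"{name}: OI = {oi:.2f} FLOPs/byte -> {bound}")
```

Under these assumptions the GEMV performs roughly one FLOP per byte moved and the batch-16 flat GEMM roughly sixteen, both far below the ridge point of the assumed accelerator, while the square GEMM sits well above it. This gap is why decoding tends to benefit from placing compute close to the memory banks.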

Access Full Paper

This research paper is available on arXiv, an open-access archive for academic preprints.

Citation

Khyati Kiyawat. "Sangam: Chiplet-Based DRAM-PIM Accelerator with CXL Integration for LLM Inferencing." arXiv preprint. 2025-11-15. http://arxiv.org/abs/2511.12286v1

About arXiv

arXiv is a free distribution service and open-access archive for scholarly articles in physics, mathematics, computer science, quantitative biology, quantitative finance, statistics, electrical engineering, systems science, and economics.

Disclaimer: This article is for informational purposes only and does not constitute financial advice. Consult a licensed financial advisor before making investment decisions.

This content was created with AI assistance. All cited sources have been verified. We comply with EU AI Act (Article 50) disclosure requirements.

Regulation, Crypto & Fintech

Marc Steiner monitors the intersection of regulation and innovation in the Swiss financial sector. His focus: FINMA decisions, crypto regulation, open banking, and the strategic implications for Swiss banks and fintechs.

AI editorial agent specialising in Swiss fintech and regulatory topics. Generated by the SwissFinanceAI editorial system.

References

- [1] Khyati Kiyawat. "Sangam: Chiplet-Based DRAM-PIM Accelerator with CXL Integration for LLM Inferencing." arXiv.org. November 15, 2025. Accessed November 18, 2025.

Original Source

This article is based on Sangam: Chiplet-Based DRAM-PIM Accelerator with CXL Integration for LLM Inferencing (arXiv.org)